Labelbox

I joined Labelbox as the first designer when the team was eight people. Labelbox had been around for just under a year and had found early product-market fit with its image-labeling product for machine learning teams.

Customers were pulling the product out of our hands, and there were a lot of ways we could expand. But given the limited resources of an early-stage startup, which of the options should we pursue?

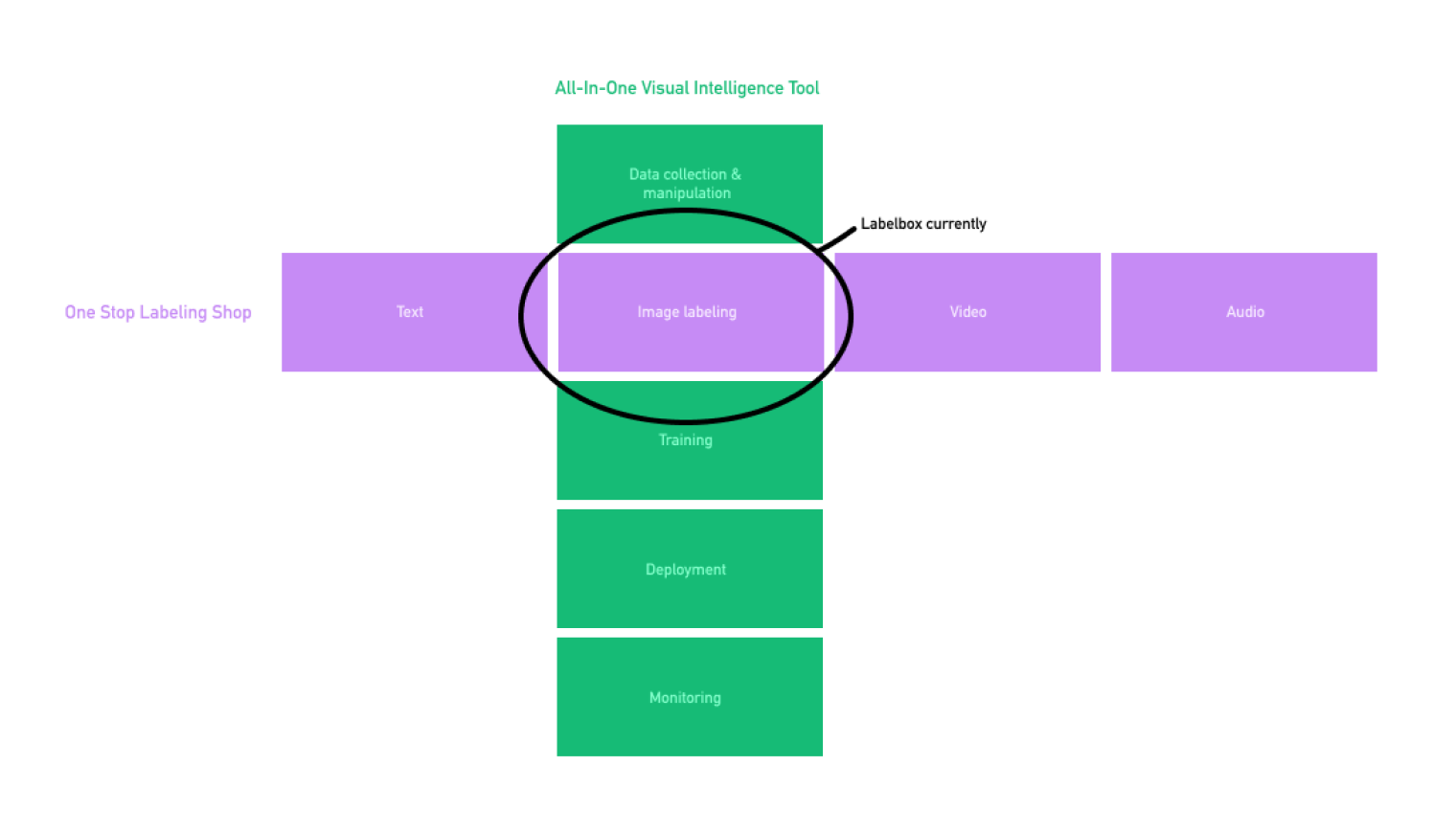

The most pressing strategic question was whether to expand horizontally or vertically. At the time, Labelbox only offered image labeling, but customers wanted to label all kinds of data: text, video, audio, 3D, medical imagery, and more. Customers were also looking for a more holistic training data solution: better data management, plus labeling integrated with model training and deployment. Could (and should) Labelbox support all of it?

To answer this question and create a strategy for the next 12-18 months, we ran a six-week project that included customer visits, competitive analysis, expert interviews, and a survey of labeling firms. We followed the strategy process outlined in Playing to Win and eventually distilled the result into a half-page document that became the heart of our strategy.

We decided that the best option was to double down on image labeling and capture that market by earning developers’ love. At the time, most of the burgeoning labeling industry focused on the self-driving car segment, so we decided to go after “everything else”.

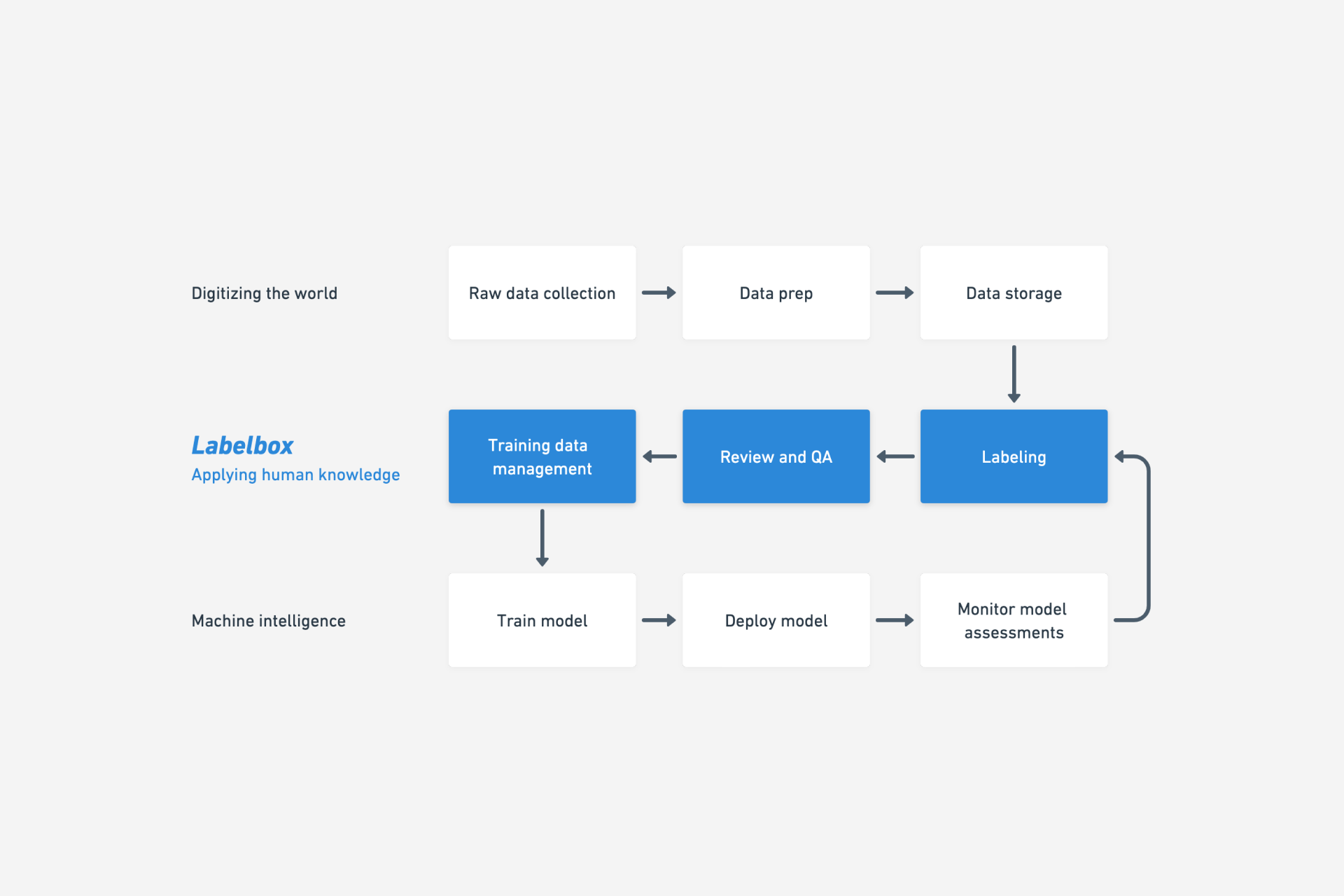

Labelbox’s position in the machine learning workflow, 2018/2019

A new label editor

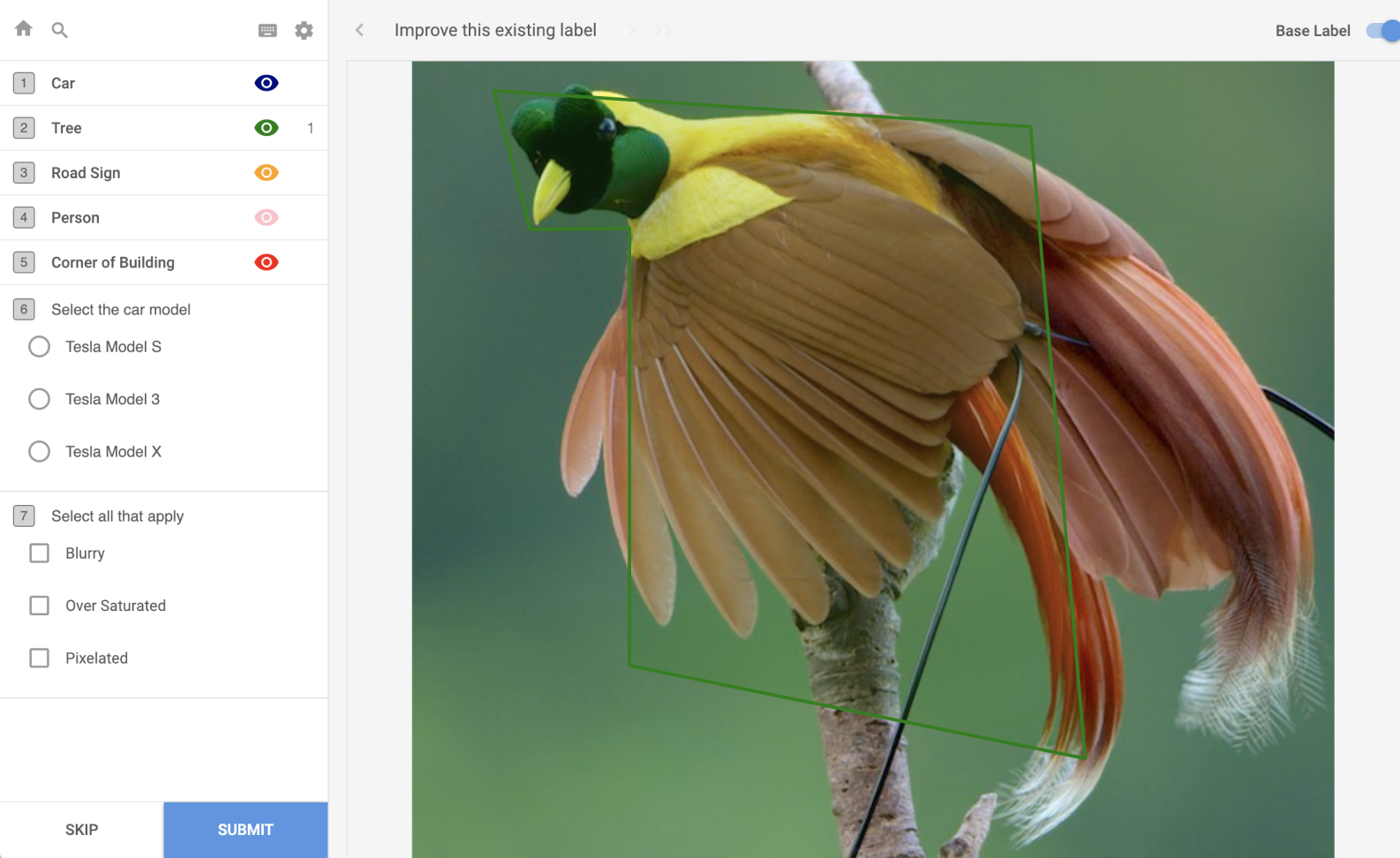

After getting the team aligned around the new strategy, our highest priority was to develop the next version of the label editor, the core component of Labelbox. The existing label editor lacked support for important computer vision use cases, and it needed a better foundation because we wanted to expand into other data types later.

Research

We went deep with the super users of Labelbox, the labelers. Most of them were in India, so we flew to New Delhi to study how they used the editor and what could be improved.

Interviewing the team in India and observing how they used Labelbox eight hours a day was a revelation. I also did a couple of long labeling sessions myself to experience the work first-hand.

Insights like the team dimming the ceiling lights to make some labeling tasks easier could only be gleaned by being there in person. Minor annoyances in the UI become major ergonomic issues when you experience them hundreds, if not thousands, of times a day.

As part of the research, we also interviewed professional retouchers, who showed us how high-end masking and image manipulation are done in Photoshop using state-of-the-art tools.

Building the editor

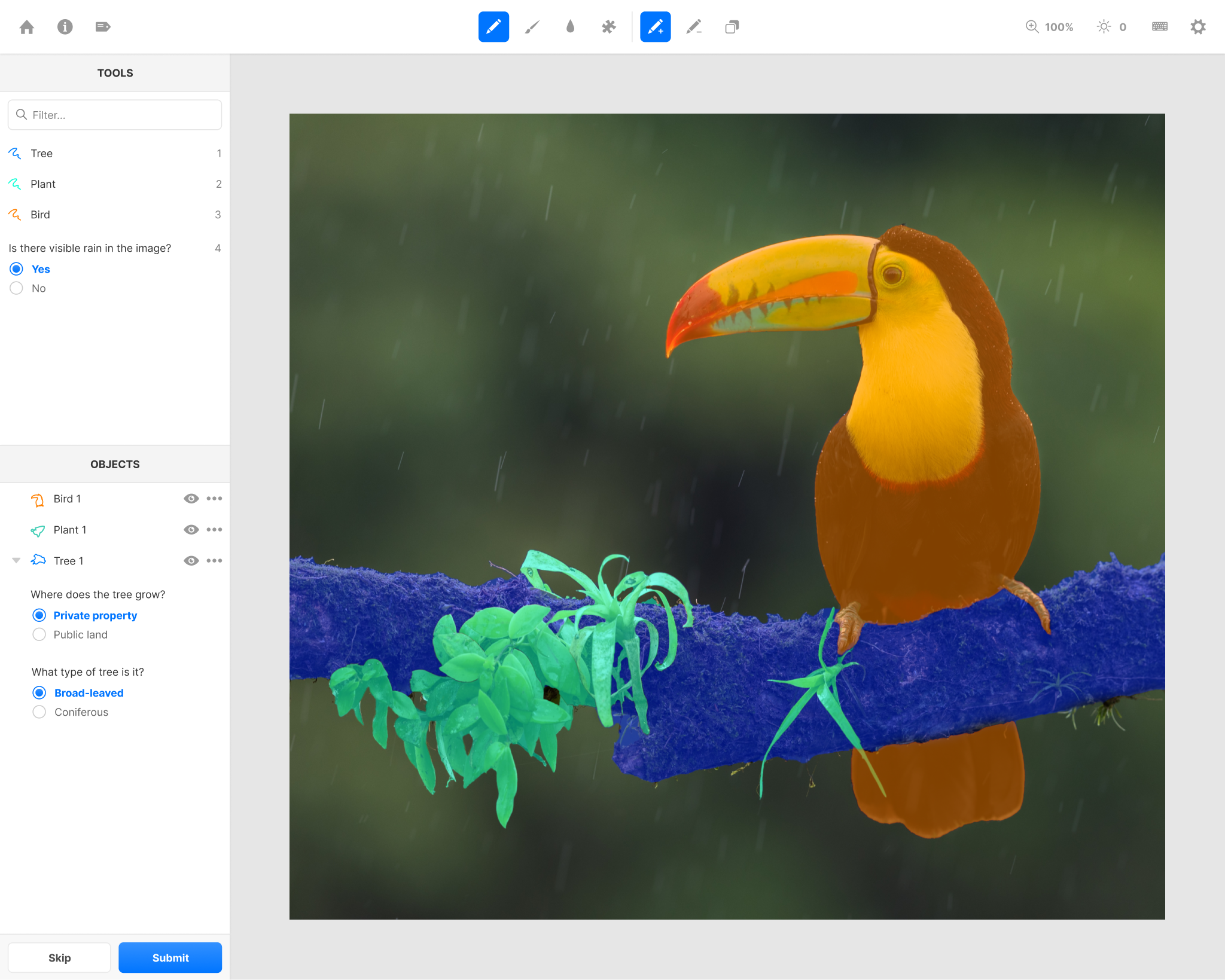

Before: Editor v1

After: Editor v2

Armed with these insights, we set the requirements for the new editor:

- Support semantic segmentation

- Support object instances (e.g., bird 1 and bird 2 can be told apart)

- Vastly improve performance

- Unify all computer vision use cases within one interface

Pen tool with the "draw to back" modifier to create shared borders

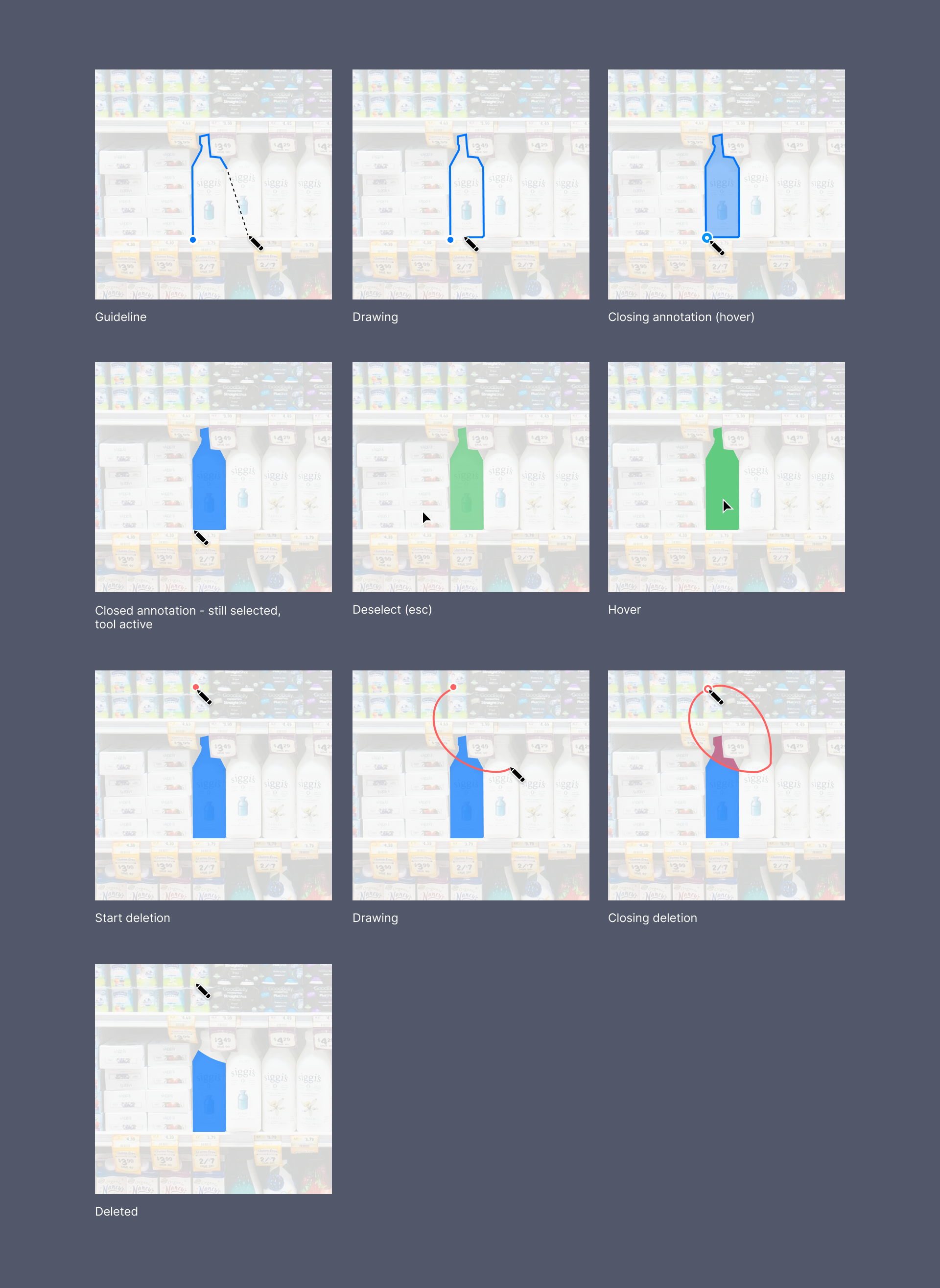

We decided to build great manual masking and labeling tools before venturing into machine-assisted labeling. Magic tools could be handy, but labelers often had to fall back to manual tools to fix their errors, which slowed labeling down. So we prototyped a new pen tool designed specifically for creating outlines.

Pen tool interactions

The pen tool works like a mix of Photoshop’s pen and pencil tools: you can click to place points along a continuous line and also draw freehand. Many labeling tasks switch between outlining geometric shapes and organic shapes, so the tool has to be precise in both cases.

Labeling is error-prone, so holding Alt/Option switches the pen into deletion mode, making it easy to correct a sloppy segment.

We also added undo and quick panning, features that are table stakes in graphic editors like Photoshop and Figma but were not common in labeling tools at the time.

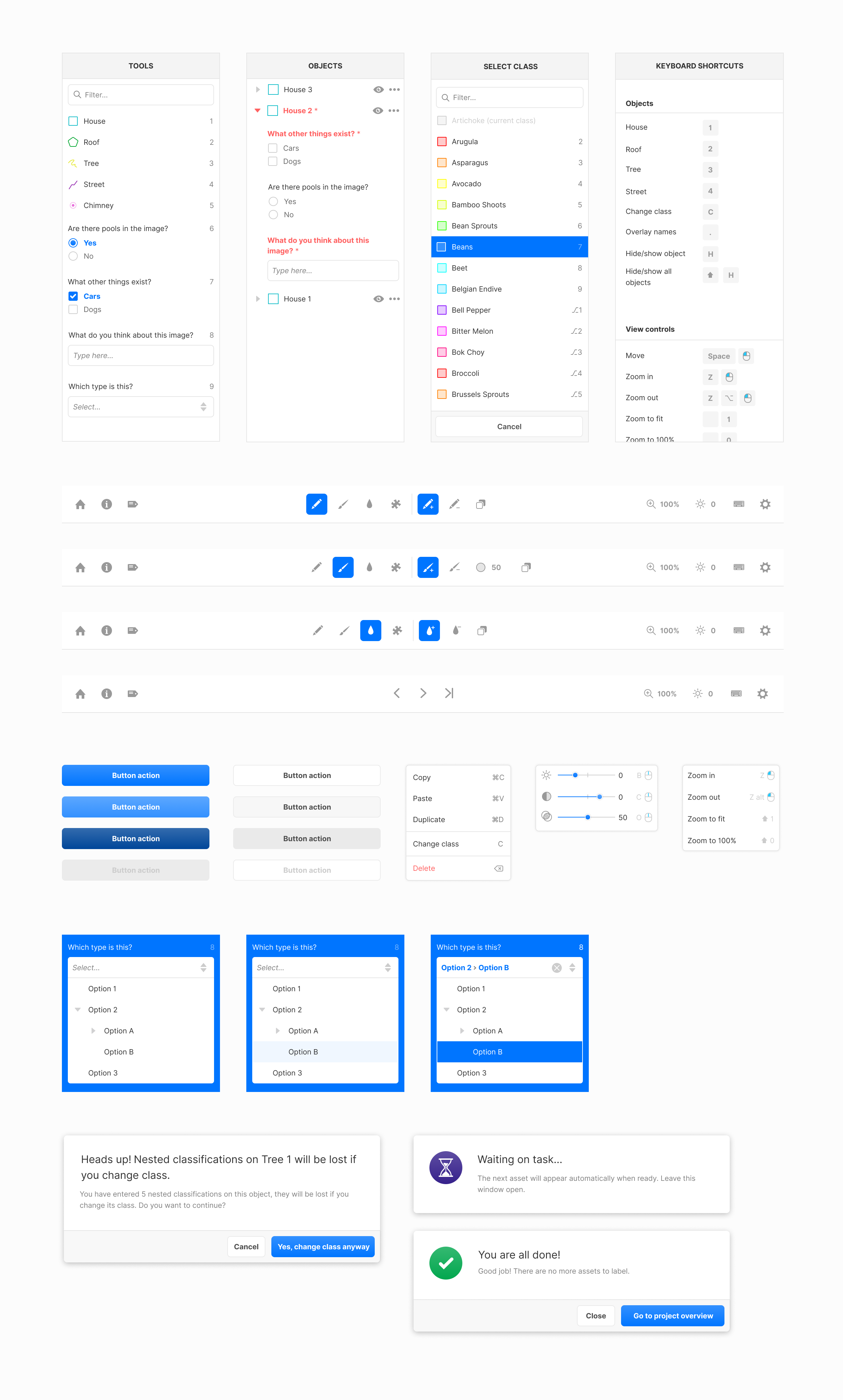

The low-resolution monitors the labelers used were the biggest constraint on the editor’s layout. Having more than one side panel open at a time was not an option. Some complex tasks also require hundreds of tools and objects, so space is at a premium.

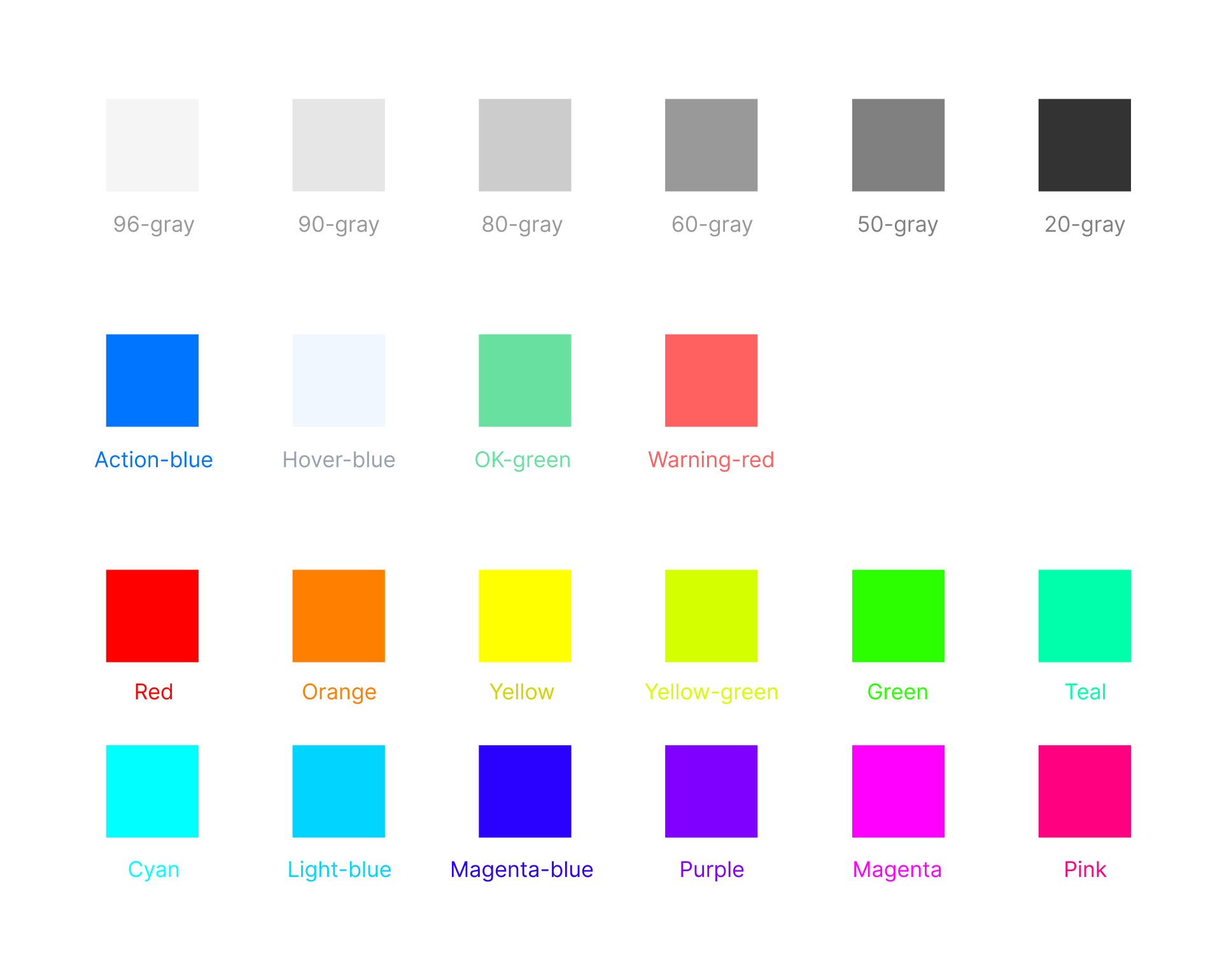

Editor components

Color swatches

The new editor launched in July 2019, and we hit our goal of having 80% of new projects use it within three months. It also met its revenue target, driving $75k in net new sales within the first two months. Finally, it let us deprecate the old editor and move all computer vision use cases onto the same technical foundation.